Best GPUs for Deep Learning — The Right 6-Card Comparison

We are rdpextra, your experts in high-power computing. You need the best tools to create your best work, and this guide helps you choose the right GPU. The GPU is the main engine for AI training and deployment. We compare six top cards for 2025, covering both desktop cards and server GPUs for AI and deep learning. Let rdpextra help you find your perfect match.

The Top 6 GPUs for Deep Learning

We have chosen six powerful cards. Each card has a specific job. Read these short sections carefully. They will help you find the right card.

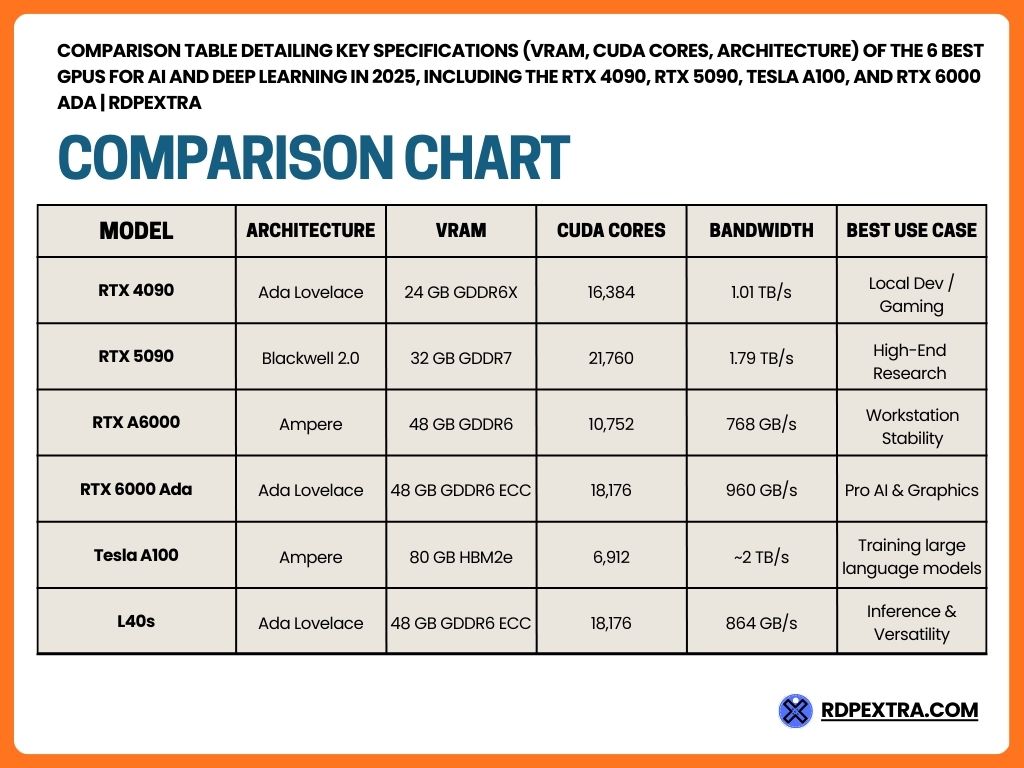

1. NVIDIA RTX 4090: The Smart Way to Start

The NVIDIA RTX 4090 is a famous card. It is perfect for developers on a budget. It is built on the Ada Lovelace architecture, a modern and fast design. This card gives you the best price-to-performance ratio. You get a lot of power without spending too much money. It is the best choice for beginners. You can use it to test small deep learning models, and it is great for personal learning and quick experiments. However, it is not meant for the server room. It does not have ECC memory, which makes it less suited to very long, professional jobs. This is one of NVIDIA's top GPUs for deep learning when starting out.

- VRAM: 24 GB GDDR6X

- CUDA Cores: 16,384

- Tensor Cores: 512 (4th Gen)

- Memory Bandwidth: 1.01 TB/s

2. NVIDIA RTX 5090: The New Performance King

The NVIDIA RTX 5090 launches in 2025. This card will be a massive upgrade. It uses the new Blackwell 2.0 architecture, which delivers a large jump in speed. The card's 32 GB of VRAM uses GDDR7 memory, the fastest GDDR generation yet. The memory bandwidth is 1.79 TB/s, which helps a lot with data-heavy tasks. This card will be ideal for serious researchers who want high speed and more VRAM than the 4090. It will handle bigger datasets easily, and it is excellent for more complex fine-tuning of foundation models on a workstation. It sets the new standard for individual performance.

- VRAM: 32 GB GDDR7

- CUDA Cores: 21,760

- Tensor Cores: 680 (5th Gen)

- Memory Bandwidth: 1.79 TB/s

3. NVIDIA RTX A6000: The Reliable Workstation Standard

The NVIDIA RTX A6000 is an older card, but it is still a true workstation powerhouse. It is built on the proven Ampere architecture. Professionals love this card for its stability. The best part is its massive memory: 48 GB of GDDR6 VRAM. This lets you load very large models and work with big data samples. The A6000 also includes ECC memory, which detects and corrects memory errors during long training sessions. This is key for production work. You need this card when you cannot afford mistakes. It is a fantastic choice for a reliable, non-server setup.

- VRAM: 48 GB GDDR6

- CUDA Cores: 10,752

- Tensor Cores: 336 (3rd Gen)

- Memory Bandwidth: 768 GB/s

4. NVIDIA RTX 6000 Ada: The Ultimate Single-Card Solution

The NVIDIA RTX 6000 Ada is a blend of the best features. It uses the fast Ada Lovelace architecture. It also has the large 48 GB VRAM capacity, and this VRAM is ECC memory. This makes it an enterprise-grade card, perfect for professionals who want the most power in a single machine. It is one of the best GPUs for AI and deep learning when power efficiency matters. It is made for heavy-duty tasks such as large-scale rendering and fine-tuning foundation models. It gives you both speed and reliability. It is a premium choice for the most demanding workstations.

- VRAM: 48 GB GDDR6 ECC

- CUDA Cores: 18,176

- Tensor Cores: 568 (4th Gen)

- Memory Bandwidth: 960 GB/s

5. NVIDIA Tesla A100: The Data Center Powerhouse

The NVIDIA Tesla A100 is the king of the cloud. It is not built for home use. It is for large server racks. It uses the Ampere architecture. The memory is its strongest feature. It uses High Bandwidth Memory (HBM2e on the 80 GB model), which provides up to 2,039 GB/s of memory bandwidth. This speed is needed for training large language models. The card comes with 40 GB or 80 GB of VRAM. This huge memory lets you fit very large AI models. It has Multi-Instance GPU (MIG) technology, which lets you split one GPU into smaller, isolated instances. This makes it highly efficient for high-performance computing (HPC).

- VRAM: 40 GB HBM2 or 80 GB HBM2e

- CUDA Cores: 6,912

- Tensor Cores: 432 (3rd Gen)

- Memory Bandwidth: ~2 TB/s

6. NVIDIA L40S: The Versatile Enterprise Card

The NVIDIA L40S is a flexible option. It is an enterprise-grade card built on the modern Ada Lovelace architecture. This GPU is built to do many jobs well, and it is strong in both AI and graphics. It has 48 GB of GDDR6 VRAM with ECC memory, which makes it very reliable. It has 18,176 CUDA Cores and 568 Tensor Cores (4th Gen). It is excellent for large-scale inference, which means it is very fast at answering user requests. It is often used in cloud services. It is a versatile recommendation for businesses running AI workloads on GPU servers, and it handles a variety of AI training and deployment tasks.

- VRAM: 48 GB GDDR6 ECC

- CUDA Cores: 18,176

- Tensor Cores: 568 (4th Gen)

- Memory Bandwidth: 864 GB/s

Understanding Key GPU Specs

You see many technical words. Let rdpextra explain them simply. You need to know what they mean. This helps you choose the best card.

CUDA Cores: These are the workers of the GPU. They do the math. Within the same architecture, more CUDA Cores mean more power. They allow for parallel processing, which means doing many calculations at the same time. This is how AI workloads run. Of the six cards here, the 5090 has the most workers.

Tensor Cores: These are special workers. They speed up specific AI math. They are vital for deep learning models. Newer generations (4th Gen, 5th Gen) are much faster than older ones. They boost performance greatly.

VRAM (Video Random Access Memory): This is the GPU's own memory. It holds the model itself. It holds the data you are training on. You need high VRAM to fit large models. Training large language models like GPT requires 40 GB or more. This is why the A100 is so popular.
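To make the VRAM point concrete, here is a minimal sketch that estimates how much memory a model's parameters need. The bytes-per-parameter figures are rule-of-thumb assumptions (2 bytes for half-precision weights, roughly 16 bytes per parameter for mixed-precision training with the Adam optimizer), the 7-billion-parameter model is hypothetical, and activation memory is ignored, so treat the results as lower bounds rather than exact requirements.

```python
# Rule-of-thumb VRAM estimate. The byte counts are assumptions, not
# measurements, and activation memory is ignored entirely.

BYTES_PER_PARAM_INFERENCE_FP16 = 2   # half-precision weights only
BYTES_PER_PARAM_TRAINING_ADAM = 16   # fp16 weights and gradients, plus fp32
                                     # master weights and two Adam moments


def estimate_vram_gb(num_params: float, bytes_per_param: int) -> float:
    """Approximate memory footprint in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def fits(num_params: float, bytes_per_param: int, vram_gb: float) -> bool:
    """True if the estimated footprint fits in the given amount of VRAM."""
    return estimate_vram_gb(num_params, bytes_per_param) <= vram_gb


if __name__ == "__main__":
    params = 7e9  # hypothetical 7-billion-parameter model
    print("inference:", estimate_vram_gb(params, BYTES_PER_PARAM_INFERENCE_FP16), "GB")
    print("training: ", estimate_vram_gb(params, BYTES_PER_PARAM_TRAINING_ADAM), "GB")
    print("fits in 24 GB for inference:",
          fits(params, BYTES_PER_PARAM_INFERENCE_FP16, 24))
```

Under these assumptions, a model that fits comfortably on a 24 GB card for inference can still need far more memory than any single card offers once you train it in full.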

Memory Bandwidth: This is the speed of data transfer. It is how fast the GPU can get data from its memory. High memory bandwidth is key. It prevents the GPU from waiting for data. The Tesla A100's HBM2e memory gives it the highest bandwidth of the six cards. This is why it performs well.
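A rough way to see why bandwidth matters: the sketch below computes the minimum time needed to read a model's weights from VRAM once, using the bandwidth figures quoted in the card sections of this guide. The 14 GB model size is a hypothetical example, and real workloads add compute time and other overheads, so this is only a lower bound.

```python
# Memory bandwidth in GB/s, taken from the card sections of this guide.
BANDWIDTH_GB_S = {
    "RTX 4090": 1010,
    "RTX 5090": 1790,
    "RTX A6000": 768,
    "RTX 6000 Ada": 960,
    "Tesla A100 (80 GB)": 2039,
    "L40S": 864,
}


def min_read_time_ms(model_size_gb: float, bandwidth_gb_s: float) -> float:
    """Lower bound on the time to read the weights once, in milliseconds."""
    return model_size_gb / bandwidth_gb_s * 1000.0


def rank_by_read_time(model_size_gb: float) -> list[tuple[str, float]]:
    """Cards sorted from fastest to slowest weight read."""
    times = {
        name: min_read_time_ms(model_size_gb, bw)
        for name, bw in BANDWIDTH_GB_S.items()
    }
    return sorted(times.items(), key=lambda item: item[1])


if __name__ == "__main__":
    model_size_gb = 14.0  # hypothetical model size, not a measurement
    for name, ms in rank_by_read_time(model_size_gb):
        print(f"{name:<20} {ms:6.2f} ms per full pass over the weights")
```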

TFLOPS: This measures raw performance. It stands for trillions of floating-point operations per second. It tells you how fast the card can do math. Higher TFLOPS means faster training. Both Single-Precision and Half-Precision TFLOPS are important for AI work.
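As a rough illustration of how TFLOPS translates into training time, the sketch below uses a common approximation for dense transformer models: about 6 floating-point operations per parameter per training token. That factor, the utilization fraction, and the example numbers are all assumptions for illustration, not figures for any card in this guide.

```python
SECONDS_PER_DAY = 86_400


def training_days(num_params: float, num_tokens: float,
                  peak_tflops: float, utilization: float = 0.4) -> float:
    """Estimate training time in days.

    Assumes ~6 FLOPs per parameter per token (a common approximation for
    dense transformers) and that the GPU sustains `utilization` of its
    peak throughput. Both are assumptions, not guarantees.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    total_flops = 6.0 * num_params * num_tokens
    sustained_flops_per_s = peak_tflops * 1e12 * utilization
    return total_flops / sustained_flops_per_s / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical workload: 1B parameters trained on 20B tokens.
    for tflops in (100, 200, 400):  # hypothetical peak half-precision TFLOPS
        days = training_days(1e9, 20e9, tflops)
        print(f"{tflops} TFLOPS peak -> about {days:.1f} days")
```

Doubling the TFLOPS halves the estimate, which is the sense in which higher TFLOPS means faster training. In practice, utilization depends heavily on memory bandwidth and software, so treat this as a first-order estimate only.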

The rdpextra GPU Server Recommendation

Do you buy these cards? Or do you rent access to them? rdpextra helps you make this choice. It is a big financial decision.

Buying a consumer card is simple. The RTX 4090 is easy to install. But enterprise cards are different. The Tesla A100 requires a special server. It needs huge power and cooling. This is expensive.

For high-VRAM dedicated GPU servers and large projects, rdpextra suggests renting. GPU servers for deep learning are ready to go. You do not worry about the hardware cost. You do not worry about maintenance. You only pay for the time you use.

Renting is the smart choice when:

- You need the massive power of the Tesla A100.

- You need to deploy AI at scale quickly.

- You need flexible billing.

- You are looking for Black Friday hosting deals.

We offer the best plans to rent dedicated… servers. This is our GPU rental solution for LLM deployment. Renting lets you scale up easily. You can get more power instantly. This is the GPU server recommendation for most growing businesses.
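The buy-or-rent decision can be sketched as a simple break-even calculation. All prices below are hypothetical placeholders, not rdpextra rates or real card prices; substitute your own quotes.

```python
def break_even_hours(purchase_cost: float, hourly_rental_rate: float,
                     hourly_ownership_cost: float = 0.0) -> float:
    """Hours of use after which buying becomes cheaper than renting.

    `hourly_ownership_cost` covers power, cooling, and maintenance.
    Returns infinity if renting is never more expensive per hour.
    """
    saving_per_hour = hourly_rental_rate - hourly_ownership_cost
    if saving_per_hour <= 0:
        return float("inf")
    return purchase_cost / saving_per_hour


if __name__ == "__main__":
    # Hypothetical numbers for illustration only.
    hours = break_even_hours(purchase_cost=10_000.0,
                             hourly_rental_rate=2.0,
                             hourly_ownership_cost=0.5)
    print(f"Buying pays off after about {hours:,.0f} hours of use")
    print(f"That is about {hours / 24 / 30:.1f} months of round-the-clock use")
```

If your expected usage is well below the break-even point, or the workload is temporary, renting is the cheaper option under these assumptions.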

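Technical Specifications at a Glance

| GPU | Architecture | VRAM | CUDA Cores | Tensor Cores | Memory Bandwidth |
| --- | --- | --- | --- | --- | --- |
| NVIDIA RTX 4090 | Ada Lovelace | 24 GB GDDR6X | 16,384 | 512 (4th Gen) | 1.01 TB/s |
| NVIDIA RTX 5090 | Blackwell 2.0 | 32 GB GDDR7 | 21,760 | 680 (5th Gen) | 1.79 TB/s |
| NVIDIA RTX A6000 | Ampere | 48 GB GDDR6 | 10,752 | 336 (3rd Gen) | 768 GB/s |
| NVIDIA RTX 6000 Ada | Ada Lovelace | 48 GB GDDR6 ECC | 18,176 | 568 (4th Gen) | 960 GB/s |
| NVIDIA Tesla A100 | Ampere | 40 GB HBM2 or 80 GB HBM2e | 6,912 | 432 (3rd Gen) | ~2 TB/s |
| NVIDIA L40S | Ada Lovelace | 48 GB GDDR6 ECC | 18,176 | 568 (4th Gen) | 864 GB/s |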

Final Decision from rdpextra

We have covered the best GPUs for AI and deep learning. Remember the key points. The 4090 is the cheapest start. The 5090 is the fastest new card. The A100 is best for high-performance computing (HPC).

Your choice depends on size. If you have a small project, buy the 4090. If you are deploying AI at scale, you should rent. You need GPU servers for deep learning.

rdpextra provides the fastest, most reliable servers. We give you instant access to the NVIDIA Tesla A100. We make it easy to start your large project. You need to focus on your AI. We focus on the hardware.

Do not let hardware slow you down. Get the power you need right now.

Order your high-performance GPU server from rdpextra now.

FAQs (AI & Deep Learning GPUs)

The RTX 4090 offers a better price-to-performance ratio for beginners and personal projects. However, for training large language models and enterprise-grade reliability, the NVIDIA Tesla A100 is ideal. The A100 features ECC memory, high VRAM (up to 80 GB), and Multi-Instance GPU (MIG), which are essential for server environments.

A minimum of 24 GB VRAM (like the RTX 4090) is necessary for fine-tuning foundation models. However, if you are training large language models from scratch or using big datasets, 40 GB to 80 GB of VRAM (like the RTX 6000 Ada or Tesla A100) is recommended. More VRAM lets you fit larger models and batches without running out of memory.

GPU server rental makes sense when you need to deploy AI at scale or when your workload is temporary. Renting is also beneficial during Black Friday hosting deals. Instead of purchasing expensive cards like the Tesla A100, choosing to rent dedicated servers is cost-effective. You won’t have to worry about hardware maintenance with rdpextra.

The NVIDIA RTX 5090 can deliver 30% to 50% better performance than the RTX 4090 for AI workloads. It uses the new Blackwell 2.0 architecture, featuring more CUDA Cores and 5th Gen Tensor Cores. Its 32 GB GDDR7 VRAM and 1.79 TB/s Memory Bandwidth make it a workstation powerhouse for large-scale inference and next-gen deep learning models.