RDP for AI: VPS vs RDP Model Training Hosting Guide

The field of Artificial Intelligence is moving so quickly that every developer and researcher now faces the same question: “Where should I train my model?” If you work on AI, Machine Learning, or Large Language Models (LLMs), you already know that running these heavy workloads on a local system is rarely practical unless you have a hardware setup worth thousands of dollars.

This is where Remote Desktop Protocol (RDP) and Virtual Private Servers (VPS) enter the frame. However, users are often confused about which one fits their specific AI tasks. Do you need a graphical interface (RDP), or an isolated, high-performance environment to run your scripts (VPS)?

I am part of the RDPExtra team, and over the last 5 years, we have helped thousands of developers navigate their scaling journeys. In this blog, we will analyse both technologies through an AI-focused lens so you can start your next project without any technical bottlenecks.

Understanding the Basics: AI Hosting Crossover

First, let’s understand why hosting matters so much for AI workloads. Both model training and LLM inference depend on massively parallel computation, which high-end GPUs such as the NVIDIA RTX 4090 or the RTX 6000 Ada handle far more efficiently than CPUs.

The “AI + Hosting Crossover” represents the intersection where cloud computing meets artificial intelligence. Today, people aren’t just looking for “hosting”; they are searching for “AI-ready infrastructure” that can handle billions of parameters without breaking a sweat.

1. RDP for AI: The Visual Powerhouse

When we talk about RDP for AI, we are essentially talking about a remote Windows desktop: you see and control the server’s screen as if a monitor were plugged into it.

- GUI Access: If you are a beginner or using tools that require a Graphical User Interface (GUI)—such as Anaconda Navigator, Jupyter Notebook GUI, or Stable Diffusion WebUI—RDP is your best bet.

- Easy Setup: You don’t need to be intimidated by the Linux terminal. You simply log in, and it feels exactly like using your personal computer.

- RDP for Machine Learning: This is perfect for researchers who need to perform frequent visualisations, check graphs in real-time, and manage files visually.

2. VPS for AI: The Scalable Engine

On the other hand, VPS GPU hosting is designed for workloads at production scale.

- Dedicated Resources: In a VPS, you get a dedicated slice of CPU, RAM, and, most importantly, the GPU. This significantly reduces the risk of “Resource Contention,” where other tenants’ workloads compete with yours for the same hardware.

- LLM Inference: If you want to deploy models like Llama 3 or Mistral behind an API, a VPS is generally the more stable and secure option.

- Automation: Here, you can run scripts 24/7 in the background without the unnecessary overhead of a GUI.
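A script that runs unattended for hours should be able to pick up where it left off after a restart. The sketch below shows one common way to do that with periodic checkpoints. It uses only the Python standard library, and the training step is a placeholder; the function names (`save_checkpoint`, `load_checkpoint`, `run`) are our own for illustration, not part of any framework.

```python
import json
import os
import tempfile


def save_checkpoint(path, state):
    """Write state to a temp file, then rename it, so a crash mid-write
    cannot leave a half-written checkpoint behind."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)  # atomic rename on the same filesystem


def load_checkpoint(path):
    """Return the saved state, or a fresh state if no checkpoint exists."""
    if not os.path.exists(path):
        return {"step": 0}
    with open(path) as f:
        return json.load(f)


def run(path, total_steps, save_every=100):
    """Resume from the last checkpoint and continue up to total_steps."""
    state = load_checkpoint(path)
    for step in range(state["step"], total_steps):
        # Placeholder: one real training step would go here.
        state["step"] = step + 1
        if state["step"] % save_every == 0:
            save_checkpoint(path, state)
    save_checkpoint(path, state)
    return state
```

Calling `run("train_state.json", 10_000)` a second time after an interruption continues from the last saved step instead of starting over. A real training job would also save model and optimizer state, which frameworks such as PyTorch handle with their own save and load functions.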

Deep Dive: Performance Comparison

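In AI training, VRAM is usually the most critical resource. The model’s weights, gradients, optimizer state, and the current batch of data all have to fit in the GPU’s memory. If they do not, training either fails with an out-of-memory error or slows down massively because data has to be offloaded to system RAM.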
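To make the VRAM point concrete, here is a rough back-of-the-envelope estimate based on parameter count alone. The byte counts are common rules of thumb, not measurements: 2 bytes per parameter for fp16 weights, and roughly 16 bytes per parameter for mixed-precision training with the Adam optimizer (weights, gradients, and optimizer state). Activations and the inference KV cache are ignored, so real usage will be higher. The function names are ours, for illustration only.

```python
# Approximate bytes needed to store one parameter at each precision.
BYTES_PER_PARAM_INFERENCE = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}

# Rule of thumb for mixed-precision Adam training:
# fp16 weights (2) + fp16 gradients (2) + fp32 master weights,
# momentum, and variance (12) = 16 bytes per parameter.
ADAM_MIXED_PRECISION_BYTES = 16.0


def inference_vram_gb(params_billion, dtype="fp16"):
    """Lower bound on VRAM (GB, 1 GB = 1e9 bytes) to hold the weights."""
    return params_billion * BYTES_PER_PARAM_INFERENCE[dtype]


def training_vram_gb(params_billion):
    """Lower bound on VRAM (GB) for full fine-tuning, excluding activations."""
    return params_billion * ADAM_MIXED_PRECISION_BYTES


for size in (7, 70):
    print(
        f"{size}B model: ~{inference_vram_gb(size):.0f} GB for fp16 inference, "
        f"~{training_vram_gb(size):.0f} GB for full training"
    )
```

Under these assumptions, a 7B model in fp16 needs about 14 GB just for its weights, while full training of the same model needs well over 100 GB. That gap is why quantization, parameter-efficient fine-tuning, or multiple GPUs come into play quickly.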

Performance & Latency

Because RDP maintains a GUI layer, a small portion of system resources is constantly consumed to render the desktop. With an AI cloud VPS, you typically work on a headless Linux system over SSH, so almost all of the machine’s resources go to your AI model.

Scaling Capability

AI models are constantly growing. Today, you might be training a 7B parameter model; tomorrow, you might need a 70B model. In a VPS environment, scaling up (for example, upgrading RAM or adding more GPU power) is usually straightforward. At RDPExtra, we have observed users starting with a small VPS and shifting to high-tier GPU servers as their datasets expand.

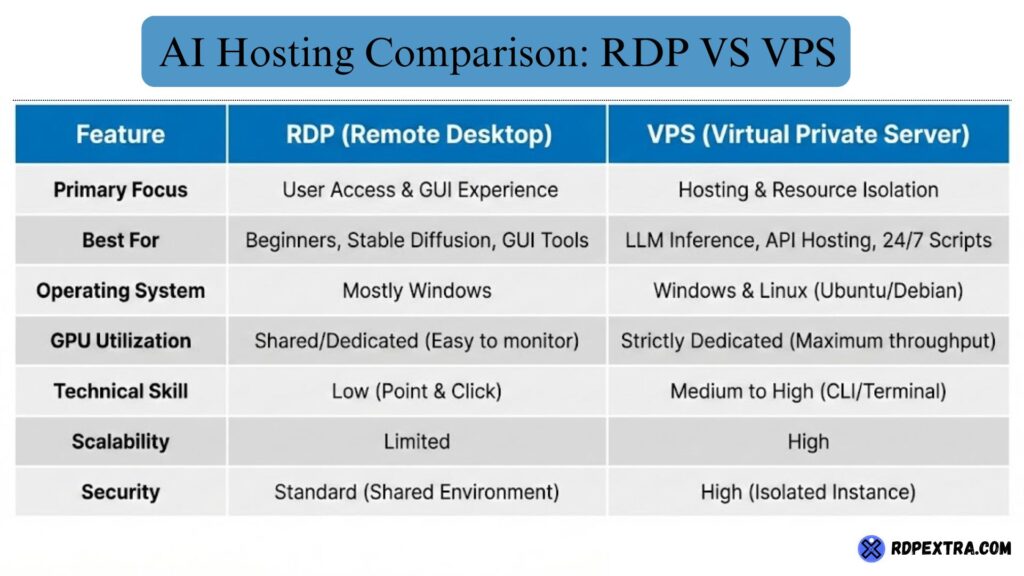

Comparison Table: RDP vs. VPS for AI Tasks

To help AI developers make an informed decision, we have created this simple comparison table:

RDPExtra’s Expertise: Why Hardware Matters

In my 5+ years of experience in technical writing and hosting, I’ve noticed one thing—users don’t just want “cheap”; they want “reliable.” AI model training isn’t a 5-minute task; it can take hours or even days.

At RDPExtra, we have optimised our servers specifically for AI workloads. We don’t just provide a server; we provide trust:

- High-End Hardware: We use high-end GPUs like the NVIDIA RTX 4090 and RTX 6000 Ada. The 4090’s 24 GB of VRAM is excellent for consumer-level tasks, while the 6000 Ada’s 48 GB of VRAM gives you the headroom that larger models need.

- Uptime Guarantee: If AI training stops midway, it results in a massive loss of time and progress. That’s why our focus is on redundancy and robust power backups.

- Customer Support: We understand that setting up an AI environment (CUDA drivers, PyTorch installation, etc.) can be difficult. Our team is always ready to support you through the technical hurdles.
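Before starting a long job on any GPU server, it helps to confirm that the driver and framework are actually in place. The sketch below is one minimal way to check, assuming an NVIDIA GPU with the `nvidia-smi` tool on the PATH. It only reports whether the `torch` package is installed; it does not import it. The function name `check_gpu_environment` is our own.

```python
import importlib.util
import shutil
import subprocess


def check_gpu_environment():
    """Report whether nvidia-smi and PyTorch are available on this machine."""
    report = {"nvidia_smi_found": False, "gpus": [], "torch_installed": False}

    smi = shutil.which("nvidia-smi")
    report["nvidia_smi_found"] = smi is not None
    if smi:
        try:
            result = subprocess.run(
                [smi, "--query-gpu=name,memory.total", "--format=csv,noheader"],
                capture_output=True,
                text=True,
                timeout=30,
            )
            if result.returncode == 0:
                # One line per GPU, e.g. its name and total memory.
                report["gpus"] = [
                    line.strip() for line in result.stdout.splitlines() if line.strip()
                ]
        except (subprocess.TimeoutExpired, OSError):
            pass  # Driver present but not responding; leave the GPU list empty.

    # find_spec only looks the package up; it does not import it.
    report["torch_installed"] = importlib.util.find_spec("torch") is not None
    return report


print(check_gpu_environment())
```

An empty `gpus` list with `nvidia_smi_found` set to `True` usually points to a driver problem rather than a missing GPU, which is a useful detail to include in a support request.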

The “Crossover” Strategy: Which one should YOU choose?

Now we come to the main question: What should you choose?

Choose RDP if:

- You are a student or learner experimenting with AI models for the first time.

- You need to use image generation tools like Stable Diffusion that require a GUI.

- You want to avoid complex Linux terminal commands.

- Your project is short-term, and you need instant, familiar access.

Choose VPS if:

- You are a startup or a developer building a product (like a Chatbot).

- You require VPS GPU hosting where performance remains consistent and dedicated.

- You are training long-term machine learning models where root access and deep customisation are mandatory.

- You need to provide API access to multiple users.
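To give a sense of what “providing API access” looks like on a VPS, here is a minimal sketch of an HTTP inference endpoint using only the Python standard library. The `generate` function is a hypothetical stand-in that simply echoes the prompt; in a real deployment it would call your model. The request shape (a JSON object with a `prompt` field) and all names here are assumptions for illustration, and a production service would add authentication, batching, and a proper web framework.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate(prompt):
    """Hypothetical stand-in for a real model call."""
    return f"echo: {prompt}"


def handle_payload(raw):
    """Validate a JSON request body and return (status_code, response_dict)."""
    try:
        prompt = json.loads(raw)["prompt"]
    except (ValueError, KeyError, TypeError):
        return 400, {"error": "expected a JSON object with a 'prompt' field"}
    if not isinstance(prompt, str):
        return 400, {"error": "'prompt' must be a string"}
    return 200, {"completion": generate(prompt)}


class InferenceHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        status, body = handle_payload(self.rfile.read(length))
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def make_server(host="127.0.0.1", port=8000):
    """Build the server; call .serve_forever() on the result to start it."""
    return HTTPServer((host, port), InferenceHandler)
```

Running `make_server().serve_forever()` starts the service on localhost. Keeping the validation in `handle_payload`, separate from the HTTP handler, makes the request logic easy to test without opening a socket.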

Benefits of Choosing the Right AI Hosting

Making the right choice doesn’t just save money; it saves your time and energy. When you use RDPExtra platforms, you benefit from:

- Reduced Latency: Our servers are connected to high-speed internet backbones, making dataset uploads and downloads incredibly fast.

- Cost Efficiency: You don’t need to spend thousands on hardware. You can rent high-end power on a “Pay as you go” model.

- Future Proofing: We constantly update our lineup with the latest hardware (such as the H100 or the next-generation RTX series), ensuring your work never becomes obsolete.
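The rent-versus-buy trade-off comes down to simple arithmetic. The sketch below computes how many GPU hours you would need to use before buying hardware costs less than renting it. The prices in the example are placeholders chosen for easy math, not RDPExtra rates or real market prices, and the calculation ignores electricity, cooling, maintenance, and resale value.

```python
def break_even_hours(purchase_cost, hourly_rate):
    """Hours of rental at hourly_rate that add up to purchase_cost."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return purchase_cost / hourly_rate


# Placeholder figures for illustration only.
example_purchase_cost = 2000.0  # hypothetical up-front hardware cost
example_hourly_rate = 0.5       # hypothetical rental price per GPU hour

hours = break_even_hours(example_purchase_cost, example_hourly_rate)
print(f"Break-even after {hours:.0f} rented hours")
```

If your expected usage is far below the break-even point, renting is the cheaper route; if you expect to keep a GPU busy around the clock for months, the comparison deserves a closer look with real prices.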

Conclusion: Future of AI Hosting

By 2026, AI models will become even heavier and more complex. AI server hosting is no longer a luxury; it has become a necessity for every developer. Whether you choose RDP for machine learning or a VPS for LLM inference, your main goal should be stability and performance.

At RDPExtra, we have made the infrastructure so simple that you can focus entirely on your code, while we take care of the hardware.

In AI training, a one-day delay means falling behind the competition. Are you ready to supercharge your models?

Visit RDPExtra now and select your perfect GPU VPS or RDP plan. Our plans are specifically designed with AI/ML developers in mind.

🚀 [Explore AI Hosting Plans at RDPExtra]

Frequently Asked Questions About RDP vs VPS for AI

Is RDP good enough for training AI models?

RDP is suitable for learning, testing, and running small AI models, especially when you need a graphical interface. However, for long training sessions, large datasets, or GPU-intensive tasks, a VPS provides better performance, stability, and resource isolation.

What is the difference between VPS LLM inference and RDP for AI?

VPS LLM inference offers dedicated resources and runs without a graphical interface, making it ideal for deploying large language models and APIs. RDP for AI focuses more on ease of use, GUI access, and experimentation rather than high-scale production workloads.

Do I need GPU hosting for AI work?

Yes, GPU hosting becomes essential when training deep learning or large language models. CPUs struggle with parallel computations, while GPUs significantly reduce training time. For serious AI work, VPS GPU hosting or dedicated AI servers deliver far better efficiency and reliability.

When should I switch from RDP to a VPS?

You should switch to a VPS when your AI models grow larger, training time increases, or you need consistent performance for deployment. Many users start with RDP for machine learning and move to an AI cloud VPS once their project reaches production scale.